Swallowing the elephant (postscript: reader emails)

It was due to a mixture of intention and laziness that I didn’t add the ability for people to comment on blog posts here. In general, I’m a believer in the adage “never read the comments section”, though I’d like to believe that the sort of folks that end up here would have plenty of insightful things to say. However, I’m finding myself increasingly old-school in how I want the Internet to be: static pages with no ads and no JavaScript like it’s 1997, so I liked the idea of doing my part here.

Thus, “writing an email” is the activation energy required to comment on things posted here. Even with that friction, a number of people sent me insightful emails after I posted the “Swallowing the Elephant” series on rendering the Moana island scene with pbrt; many thanks to everyone who took the time to write. Today, we’ll dig into a few of those emails, enjoy another parsing speedup, and learn a few things along the way.

Parsing floats, revisited

When we ended before, roughly 20% of scene parsing time was spent turning floats-as-text into floats-in-memory. That felt like a lot, but it wasn’t clear to me what do to about it.

Fortunately, both Jon Harper and Алексей Аверченко pointed me at a nice

little library,

double-conversion, that

Google has released. Both of them told me that it was state-of-the-art for

efficiently and accurately printing floats and both suggested that it would

also likely be good at parsing them. I did a few benchmarks and found that

they were right: it was between 1.5x and 2x faster than strtof() on the

system I was using. Nice!

It was easy enough to use that for the float parsing code in pbrt-next, the branch I use for hacking on new ideas. With fingers crossed, I ran some benchmarks. Previously, pbrt-next spent 11m 24s parsing the Moana island scene. When using double-conversion, parsing time dropped to 9m 18s, a 1.2x speedup.

That’s delightful, though it doesn’t quite fit with the earlier measurements,

which had 20% of startup time spent in strtof(). If 20% of time is spent

parsing floats, doubling the performance of float parsing should give

roughly a 1.1x speedup, so something’s off somewhere. Forgive me, but I’m

going to be lazy here and not chase down exactly what happened; when you

get a greater than expected speedup, it can be hard to muster the

motivation to chase down where your earlier measurements were off. tl;dr,

double-conversion is fast.

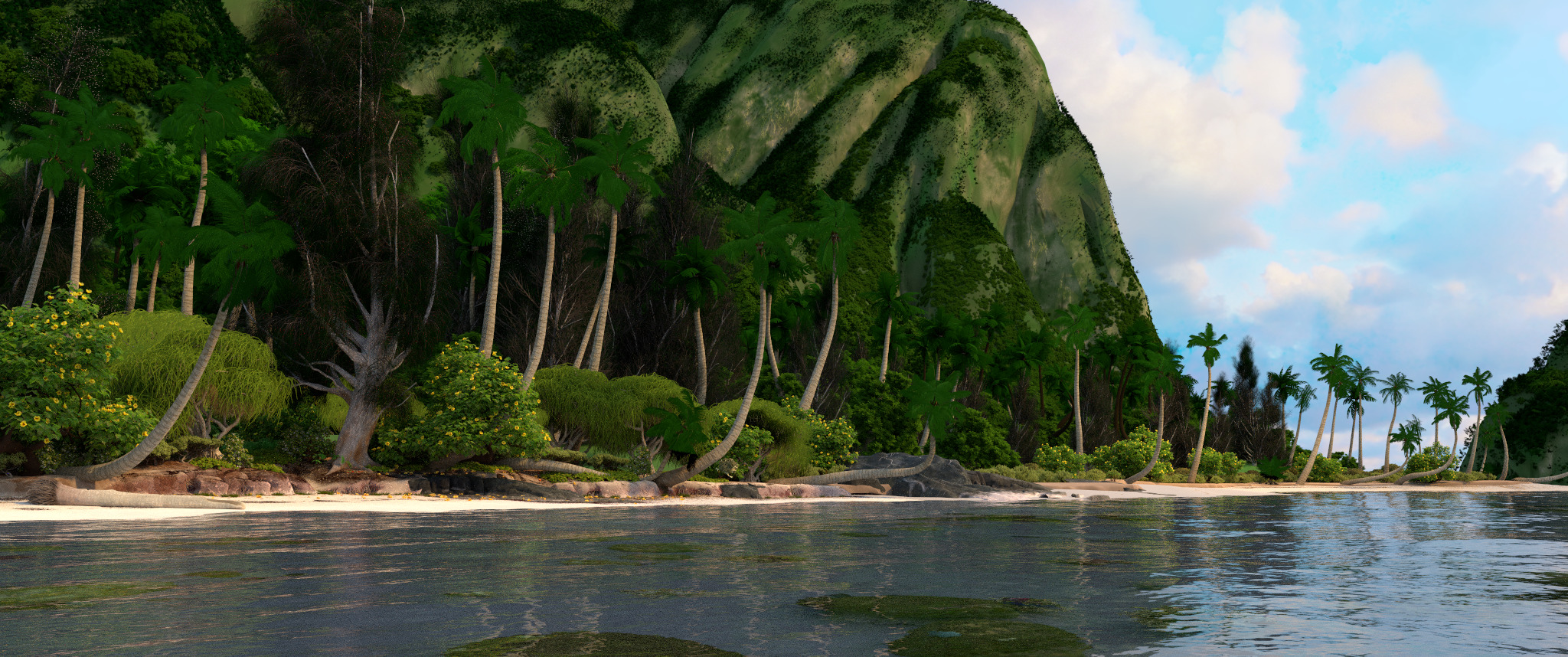

Allow me to distract you from my lack of benchmarking rigor with another rendering:

Moana island, closer in, rendered with pbrt at 2048x858 resolution with

256 samples per pixel. Rendering time with a 96 vCPU Google Compute

Engine instance at 2 GHz was just 34m 32s.

Moana island, closer in, rendered with pbrt at 2048x858 resolution with

256 samples per pixel. Rendering time with a 96 vCPU Google Compute

Engine instance at 2 GHz was just 34m 32s.

Printing floats, revisited

Ever since I read Bruce Dawson’s nice blog post about accurately printing

floats,

I’ve been nuts about using %.9g with printf when printing

floating-point values. The idea of printing out a truncated number that

doesn’t capture the full precision of a floating-point value drives me

bananas; in what world does it make sense for default behavior for %f to

be to throw away precision?

Anyway, %.9g works well enough, but it has one shortcoming: it gives a

sufficiently precise value to be able to accurately reconstruct the

original floating-point value, but not necessarily the shortest value that

will do that. Consider the real number 0.2: it can’t be exactly

represented in base-2 floating-point. With 32-bit floats, the closest

representable value to 0.2 is 0.20000000298023223876953125. In

turn, if you print that using %.9g, you get 0.200000003. That is

indeed enough precision so that parsing it gets you back to the exact

value as before, but it’s a little messy—all those zeros and then a bit

of schmutz, more characters than you started out with.

I thought that discrepancy might cause some trouble when converting the

Moana island scene to use PLY

meshes.

When pbrt is run with the --toply option, it parses the scene

description, writes out PLY files for big meshes, and then prints the

remainder of the scene description to standard output. I made sure to use

%.9g for all of that printing in order to be sure that the new scene

description was completely unchanged by the conversion process (every bit

is sacred and all that).

Aesthetic issues of numbers like 0.200000003 in the output aside, I

wondered whether the files were significantly larger than they needed to be

due to carrying along more digits than were actually needed. I didn’t know

what to do about that until I checked out

double-conversion’s

routines for printing floats and doubles; they include

ToShortestSingle(),

which prints a float with just enough precision to reconstruct its

floating point value. In turn, that prints the rounded float value of

0.2 as 0.2.

I changed pbrt to use ToShortestSingle() rather than %.9g and

re-converted the scene to PLY format, with high hopes that the residual

pbrt files would be significantly smaller and that in turn, parsing time

would be further reduced thanks to having less stuff to get through.

Unfortunately, the scene files were only 2.5% smaller; a nice little

something, but not enough to materially affect startup time.

Tagged pointers and friends

Last time, when discussing additional ways to further reduce memory use, I suggested using unused bits in pointers for type identification and then manually performing virtual function calls using those type indexes as a way to save all the storage of vtable pointers. Many people wrote with information about prior applications of this idea; it’s definitely a thing, especially in modern dynamically-typed languages.

Juho Snellman pointed me to a blog post he wrote about the history of the representation of numbers in Lisp, which is full of great history on this topic. He also noted:

Alternatively, since you’re already considering arena allocation for these objects, it seems like some form of range-based pointer tagging (e.g. BIBOP) could work well for. The core idea is that you reserve each X byte block (e.g. 1MB) in the arena for just one datatype. The block->(vtable for all objects in the block) mapping is kept on the side. With properly aligned blocks, going from a pointer to an index in the vtable vector can be just a couple of bit operations.

Steele’s Data Representations in PDP-10 MacLISP seems to be the origin of the range-based pointer tagging idea. This idea seems particularly appealing in a world of massive 64-bit address spaces: map a single huge range of virtual memory for triangles, say, let the virtual memory system allocate physical pages only when needed, and then do a quick check to see if a given pointer is in that range to know if it’s a pointer to a triangle.

Going back to the original idea, number of libraries make it easy to encode type information directly in pointers and even offer a measure of type safety when doing so. Bart Schuurmans pointed me at LLVM’s PointerSumType, which uses the low bits of a pointer for type identification. The caller must provide sufficiently aligned pointers to leave enough bits for type identification, which is arguably a more portable approach than stealing currently-unused high bits from 64-bit pointers. (Juho also noted that using the low bits is likely to lead to more efficient code on x86 than using the high bits.) Bryan Catanzaro pointed me at DiscriminatedPtr in Facebook’s folly library, which uses the high bits, which is preferable if the number of distinct types to be represented is more than a small handful.

My earlier assumption had been that given a type index, the best way to

dynamic dispatch would be to do an indirect function call through a table

of function pointers. Sebastian Sylvan

wrote a nice email arguing that using a switch statement or explicit

ifs based on the type could actually be a better approach, especially if

there are not too many different types (as is the case here).

Consider something like:

int type = /* extract the bits from the pointer */;

if (type == TRIANGLE)

return RayTriangleIntersect(...);

else if (type == CURVE)

return RayCurveIntersect(...);

else

return shapeVTables[type]->Intersect(...);

The common cases—triangles and curves—are checked first and those if

tests would turn into direct branches that likely be very well-predicted

(by which I mean “it’s probably a triangle”). Further, the code for those

cases could even be inlined if we wanted; that gives us now two things that

might give better performance than a virtual function call. For a

situation like this, where there are a very small number of commonly-used

cases and then a long-ish tail of more rare ones, this implementation

approach is potentially appealing from a performance perspective—it’s not

necessarily just about saving memory by getting rid of vtable pointers.

Having two good reasons for adopting this approach (memory use and performance) now has me conflicted about how to proceed with respect to pbrt. pbrt’s two main reasons for existence are to teach people how physically based rendering works through code and to show the implementation of a reasonably well-written ray tracer.

Is this a technique that’s important enough for renderer implementation that we should include it in the book in the interests of pedagogical completeness, or is it too much added complexity for the student trying to get a handle on the basics of ray tracing in the first place? I can’t decide, but at least there’s some time to figure it out before a new version of the book is due. Onward to hacking up an implementation and seeing how it feels.